A few days back, we shipped NXTP, our protocol for enabling fully trustless transfers and contract calls between Ethereum-compatible domains (chains & L2s).

This blog post will attempt to explain why interoperability in the Ethereum ecosystem is difficult, and from there show why we think NXTP represents the beginning of a true long-term solution for the ecosystem.

The Need for Trustless Ethereum Interoperability

Multichain/L2 Ethereum is here, and it’s here to stay. Protocols and applications have all shifted their strategy towards migrating to multiple domains. Their users are now having to contend with the challenges of moving between layers or to other L1 systems/sidechains every day. This has spurred the creation of dozens of new bridges and interoperability protocols as projects have scrambled to enable this functionality for DeFi.

As can be expected, this has also brought on a number of high-profile hacks and scams:

Despite these examples, every bridging system out there markets itself as trustless, secure, and decentralized (even if that’s not at all the case). This means a big challenge for developers and users is now, “how can I figure out which bridging mechanisms are actually cryptoeconomically secure?”

In other words, how can users discern between types of bridges to determine who they are trusting when moving funds between chains?

What Does “Trustless” Actually Mean in Cryptoeconomics?

In the research community, when we talk about cryptoeconomic security and the property of trustlessness, we are really asking one very specific question:

Who is verifying the system and how much does it cost to corrupt them?

If our goal is to build truly decentralized, uncensorable public goods, then we have to consider that our systems could be attacked by incredibly powerful adversaries like rogue sovereign nations, megacorporations, or megalomaniacal evil geniuses.

Maximizing security means maximizing the number and diversity of verifiers (validators, miners, etc.) in your system, and this typically means trying your best to have a system that is verified entirely by Ethereum’s validator set. This is the core idea behind L2 and Ethereum’s approach to scalability.

Aside: Most people don’t realize this, but scalability research is interoperability research. We’ve known for ages that we can scale by moving to multiple domains; the problem has always been how to communicate with those domains trustlessly. That’s why John Adler’s seminal paper on optimistic rollups is titled “Trustless Two-Way Bridges With Sidechains By Halting”.

What Happens If We Add New Verifiers Between Domains?

Let’s take what we’ve learned above about cryptoeconomic security and apply it to bridges.

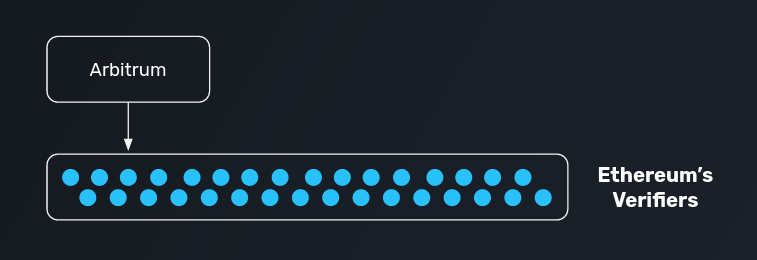

Consider a scenario where you have your funds on Arbitrum. You’ve specifically chosen to use this domain because it is a rollup, meaning that (with some reasonable assumptions) your funds are fully secured by Ethereum’s underlying verifiers. In other words, your funds are about as cryptoeconomically secure as they can possibly be in the blockchain ecosystem.

Now imagine that you decide to use a bridge to move your funds cheaply and quickly to Optimism. Optimism is also trustless, so you feel comfortable having your funds there knowing that they will share the same level of security (Ethereum’s security) that they do on Arbitrum.

However, the bridge protocol that you use utilizes its own set of external verifiers. While this may not initially seem like a big deal, your funds are now no longer secured by Ethereum, but instead by the verifiers of the bridge:

If this is a lock/mint bridge, creating wrapped assets, then the bridge verifiers can now unilaterally collude to steal all of your funds.

If this is a bridge that uses liquidity pools, the bridge verifiers can similarly collude to steal all of the pool capital from LPs.

Despite having waited years for secure, trustless L2s, your situation is now the same as if you had used a trusted sidechain or L1 construction. 😱

The key takeaway is that cryptoeconomic systems are only as secure as their weakest link. When you use insecure bridges, it doesn’t matter how secure your chain or L2 is anymore. And, similar to the security of L1s and L2s, it all comes entirely down to one question: who is verifying the system?
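To make the weakest-link idea concrete, here is a minimal sketch in Python. The `Hop` type, the `weakest_link` helper, and every dollar figure are hypothetical and chosen purely for illustration; they are not measurements of any real chain or bridge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    name: str
    verified_by: str
    cost_to_corrupt_usd: float  # hypothetical, illustrative figure only


def weakest_link(path: list[Hop]) -> Hop:
    """An attacker only needs to corrupt the cheapest verifier set on the path."""
    return min(path, key=lambda hop: hop.cost_to_corrupt_usd)


# Made-up numbers: both rollups inherit Ethereum's security, the bridge does not.
path = [
    Hop("Arbitrum", "Ethereum validators", 10_000_000_000),
    Hop("bridge", "external bridge verifiers", 50_000_000),
    Hop("Optimism", "Ethereum validators", 10_000_000_000),
]

weakest = weakest_link(path)
print(f"{weakest.name}: secured by {weakest.verified_by}")
```

However high the numbers for the two rollups are, the security of the whole transfer is capped by the bridge hop.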

A Taxonomy of Interoperability Protocols

We can break down all interoperability protocols into three overarching types based on who verifies them:

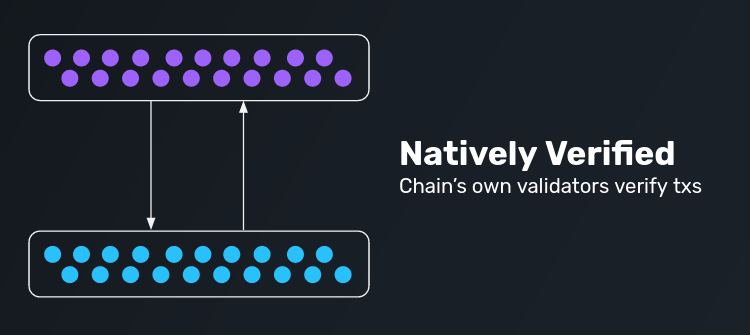

Natively Verified

Natively verified protocols are ones where all of the underlying chains’ own verifiers are fully validating data passing between chains. Typically this is done by running a light client of one chain in the VM of another chain and vice versa.

Examples include Cosmos IBC and Near RainbowBridge. Rollup entry/exits are a special form of this as well!

Advantages:

Most trustless form of interoperability because the underlying verifiers are directly responsible for bridging.

Enables fully generalized message passing between domains.

Disadvantages:

Relies on the underlying trust and/or consensus mechanisms of the domain to function, so it must be custom built for each type of domain.

The Ethereum ecosystem is highly heterogeneous: we have domains that are everything from zk/optimistic rollups to sidechains to base chains which run a large variety of consensus algorithms: ETH-PoW, Nakamoto-PoW, Tendermint-PoS, Snowball-PoS, PoA, and many others. Each of these domains requires a unique strategy for implementing a natively verified interoperability system.

Externally Verified

Externally verified protocols are ones where an external set of verifiers is used to relay data between chains. This is typically represented as an MPC system, oracle network, or threshold multisig (all of these are effectively the same thing).

Examples include Thorchain, Anyswap, Biconomy, Synapse, PolyNetwork, EvoDeFi, and many many many others.

Advantages:

Allows for fully generalized message passing between domains.

Can easily be extended to any domain in the Ethereum ecosystem.

Disadvantages:

Users and/or LPs fully trust the external verifiers with their funds/data. This means the model is fundamentally less cryptoeconomically secure than the underlying domains (similar to our Arbitrum to Optimism example above).

In some cases, projects will use additional staking or bonding mechanisms to try to add security for users. However, this typically does not make a lot of economic sense. In order for the system to be trustless, users have to be insured up to the maximum ruggable amount, and that insurance must come from the verifiers themselves. This not only significantly increases the capital required in the system, but also defeats the whole purpose of having minted assets or liquidity pools in the first place.
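The arithmetic behind this argument can be sketched in a few lines of Python. The function names and the dollar amounts below are assumptions made up for illustration, not figures from any real bridge.

```python
def is_fully_insured(verifier_bond: float, max_ruggable: float) -> bool:
    """Users are only made whole if the bond covers everything that could be stolen."""
    return verifier_bond >= max_ruggable


def capital_per_unit_bridged(value_at_risk: float, verifier_bond: float) -> float:
    """Total capital tied up in the system for each unit of value it secures."""
    return (value_at_risk + verifier_bond) / value_at_risk


value_at_risk = 100_000_000  # hypothetical funds held by the bridge
partial_bond = 10_000_000    # hypothetical bond that covers only 10%
full_bond = value_at_risk    # bond that matches the maximum ruggable amount

print(is_fully_insured(partial_bond, value_at_risk))       # partial bond
print(is_fully_insured(full_bond, value_at_risk))          # full bond
print(capital_per_unit_bridged(value_at_risk, full_bond))  # capital overhead
```

Under these assumptions, a bond large enough to insure users doubles the capital locked in the system, which is the economic problem described above.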

Locally Verified

Locally verified protocols are ones where only the parties involved in a given cross-domain interaction verify the interaction. Locally verified protocols turn the complex n-party verification problem into a much simpler set of 2-party interactions where each party verifies only their counterparty. This model works so long as both parties are economically adversarial — i.e. there’s no way for both parties to collude to take funds from the broader chain.
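One common way to build this kind of 2-party interaction is a hash-time-locked atomic swap. The sketch below is a generic in-memory simulation of that pattern, not NXTP's actual mechanism; the `HashTimeLock` class, the party names, and the expiry values are all hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class HashTimeLock:
    """Funds locked on one chain: claimable with the preimage, refundable after expiry."""
    sender: str
    recipient: str
    amount: int
    hashlock: bytes
    expiry: int
    settled: bool = False

    def claim(self, preimage: bytes, now: int) -> str:
        if self.settled:
            raise ValueError("already settled")
        if now >= self.expiry:
            raise ValueError("lock expired")
        if hashlib.sha256(preimage).digest() != self.hashlock:
            raise ValueError("wrong preimage")
        self.settled = True
        return self.recipient  # funds go to the recipient

    def refund(self, now: int) -> str:
        if self.settled:
            raise ValueError("already settled")
        if now < self.expiry:
            raise ValueError("not expired yet")
        self.settled = True
        return self.sender  # funds return to the sender


secret = b"known only to the user at first"
hashlock = hashlib.sha256(secret).digest()

# The user locks on the source chain. The counterparty checks that lock, then
# locks on the destination chain with a shorter expiry.
source_lock = HashTimeLock("user", "counterparty", 100, hashlock, expiry=200)
dest_lock = HashTimeLock("counterparty", "user", 100, hashlock, expiry=100)

# Claiming on the destination chain reveals the secret, which lets the
# counterparty claim on the source chain before that lock expires.
paid_on_dest = dest_lock.claim(secret, now=50)
paid_on_source = source_lock.claim(secret, now=60)
```

Each party only checks the lock created by the other, and if either side walks away the funds can be refunded after expiry, so no third set of verifiers is needed.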

Examples include Connext, Hop, Celer, and other simple atomic swap systems.

Advantages:

Locally verified systems are trustless — their security is backed by the underlying chain given some reasonable guarantees shared by rollups (e.g. the chain cannot be censored for more than X days).

They are also very easy to extend to other domains.

Note: not every locally verified system is trustless. Some take trust tradeoffs to improve UX or add extra functionality.

For example, Hop adds some trust assumptions through their need for a fast arbitrary-messaging-bridge (AMB) in their system: the protocol unlocks bonder liquidity in 1 day rather than waiting a full 7 days when exiting rollups. The protocol also needs to rely on an externally verified bridge if no AMB exists for a given domain.

Disadvantages:

Locally verified systems cannot support generalized data passing between chains.

What the above means is a bit nuanced and comes down to permissioning: it is possible for a locally verified system to enable cross-domain contract calls but only if the function being called has some form of logical owner. For example, it is possible to trustlessly call a Uniswap swap function across chains because the swap function can be called by anyone who has swappable tokens. However, it is not possible to trustlessly lock-and-mint an NFT across chains — this is because the logical owner of the mint function on the destination chain should be the lock contract on the source chain, and this is not possible to represent in a locally verified system.

The Interoperability Trilemma

Now we get to the thesis of this article, and the mental model that should drive user and developer decisions around bridge selection.

Similar to the Scalability Trilemma, there exists an Interoperability Trilemma in the Ethereum ecosystem. Interop protocols can only have two of the following three properties:

Trustlessness: having equivalent security to the underlying domains.

Extensibility: able to be supported on any domain.

Generalizability: capable of handling arbitrary cross-domain data.
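The trilemma can be summarized as a small lookup table. This Python sketch simply encodes the advantages and disadvantages listed in the taxonomy above; the names `PROPERTIES`, `DESIGNS`, and `sacrificed_property` are our own labels for illustration.

```python
PROPERTIES = frozenset({"trustless", "extensible", "generalizable"})

# Each design keeps exactly two of the three properties.
DESIGNS = {
    "natively verified": frozenset({"trustless", "generalizable"}),
    "externally verified": frozenset({"extensible", "generalizable"}),
    "locally verified": frozenset({"trustless", "extensible"}),
}


def sacrificed_property(design: str) -> str:
    """Return the one property that the given design gives up."""
    (missing,) = PROPERTIES - DESIGNS[design]
    return missing


for design in DESIGNS:
    print(f"{design}: gives up being {sacrificed_property(design)}")
```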

How Do Connext and NXTP Fit Into This?

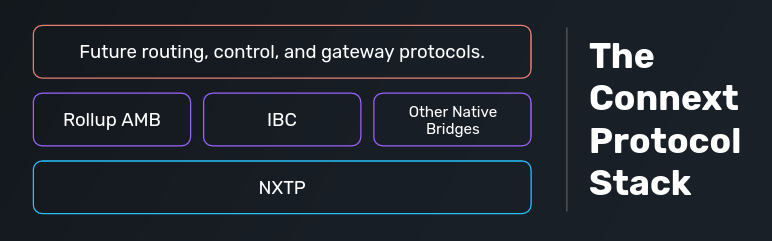

There’s no easy way for us to get all three desirable interoperability properties. We’ve realized, however, that we can take the same approach to solving the Interoperability Trilemma that Ethereum does to solving the Scalability Trilemma.

Ethereum L1 optimizes for security and decentralization at the cost of scalability. The rationale behind this is that these properties are likely the most important for the longevity and utility of a blockchain. Ethereum then adds scalability via L2/sharding as a layer on top of an existing secure and decentralized backbone.

At Connext, we strongly believe that the interoperability system with the most longevity, utility, and adoptability in the Ethereum ecosystem will be one that is maximally trustless and extensible. For this reason, NXTP is a locally verified system specifically designed to be as secure as the underlying domains while still being usable on any domain.

So what about generalizability? Similar to scalability in the Ethereum ecosystem, we can add generalizability by plugging in natively verified protocols on top of NXTP (as a “Layer 2” of our interop network!). That way, users and developers get a consistent interface across any domain, and can “upgrade” their connection to be generalized in cases where that functionality is available.

This is why we say NXTP is the base protocol of our interoperability network. The full network will be made up of a stack of protocols, which will include NXTP, generalized crosschain bridges specific to a pair of domains, and protocols to connect them all together into one seamless system. 🌐

Huge thanks to James Prestwich, Eli Krenzke, Dmitriy Berenzon, and in general the broader L2 research community for the many conversations that contributed to the ideas in this post over the past couple of years, as well as for proofreading my silly typos. 😄

Want to Get Involved?

Try out Connext at https://xpollinate.io!

We’re hiring! Check out our job postings if you’re interested in joining the core team.

Join our Discord chat: we have a super active community that would love to meet you!

If you want to use Connext as part of your project, check out our docs and/or reach out to us on our Discord above.

Connext is fully open source, so community members are always welcome and encouraged to contribute to the protocol!